Global average temperatures in the 20th century, and more specifically during the period 1971-2000, were the hottest in 1400 years, according to a first-of-its-kind scientific study. The study, conducted by a team of 78 climate researchers in 24 countries, breaks new ground in climate science: the team compiled direct and proxy data from a range of sources to reconstruct 2000 years of temperature change for seven continental-scale regions. The global warming trend they detected, which began in the late 19th century and accelerated over the course of the 20th, stands in stark contrast to, and reverses, a long-term cooling trend that lasted well over 1000 years.

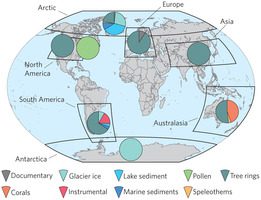

Reconstructing climate change across seven continental-scale regions over the past 2000 years, the researchers drew on direct observations of temperature, as well as a variety of proxy data that included ice and coral reef cores, tree-ring measurements, pollen and lake sediment sampling. The study, “Continental-scale temperature variability during the past two millennia,” was published in the current issue of Nature Geoscience.

1971-2000: the hottest period in the last 1400 years

The primary motivation for the team of 78 researchers was to add to our understanding of climate change by reconstructing 2000 years of temperature change at the regional, continental scale, which has been a blind spot in scientific understanding to date. Although they did not try to attribute the abrupt reversal from cooling to warming to human or natural factors, the results add further evidence supporting the widely accepted view that human-caused (anthropogenic) emissions of greenhouse gases since the beginning of the Industrial Age have intensified the greenhouse effect.

While climate change prior to the late 19th century was driven primarily by natural forces – e.g. cyclical changes in the Earth’s orbit around the Sun and its precession, variations in solar activity and volcanic eruptions – such forces are not sufficient to account for the dramatic reversal and rise in global average temperature registered in the 20th century, according to the report authors.

“The pre-industrial trend was likely caused by natural factors that continued to operate through the 20th century, making 20th century warming more difficult to explain if not for the likely impact of increased greenhouse gases,” lead co-author Darrell Kaufman, a Regents’ professor at Northern Arizona University, was quoted as saying in a Phys.org report.

According to Dr. Steven Phipps, another co-author, from the ARC Centre of Excellence for Climate System Science at the University of New South Wales (UNSW),

“The striking feature about the sudden rise in 20th century global average temperature is that it comes after an overall cooling trend that lasted more than a millennium. This research shows that in just a century the Earth has reversed 1400 years of cooling.”

First-of-its-kind study on regional climate change

Until now, the inability to reconstruct climate change at regional scales has been a weakness of climate science research. Being able to do so not only improves our understanding of past changes in climate, but should also help climate scientists better model and forecast climate change in the present and future.

“Climate change at that scale is more relevant to societies and ecosystems than global averages,” Kaufman elaborated. “Regional-scale differences help us to understand how the climate system works, and that information helps to improve the models used to project future climate.”

Examining the data, the research team found that temperature varied more uniformly within continental-scale regions than between them. While the evidence shows that the recent shift to a warming trend has been experienced globally, other well-known climate events of the past, such as the Medieval Warm Period and Little Ice Age, were more regional in their geographic extent.